FUCK THE POLICE

911 EVERY DAY

too bad you don't use a mac

Yeah then he wouldn't be able to do ANYTHING and it would be so terrific! And he'd be overpaying for parts by about 30%.

too bad you don't use a mac

Vista is a great OS. Actually, I had more problems with XP when it was released than I did with Vista. Vista is so much more IT-friendly too.

Yeah then he wouldn't be able to do ANYTHING and it would be so terrific! And he'd be overpaying for parts by about 30%.

You see, anyone who has used a Mac knows that this comment comes from complete ignorance. It's like a kindergartner trying to lecture you on quantum physics. You just chuckle to yourself and go "awwwwww how cayooooote."

*In my most pretentious voice*

You adorable little thing watermark, I was being condescending/patronizing....

implicit derivatives and calculating the rates of change.
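
For a concrete case, here is a small worked example. The unit circle is my own pick for illustration, not something from the thread. Differentiate both sides with respect to x, treating y as a function of x:

```latex
\begin{aligned}
x^2 + y^2 &= 1 \\
2x + 2y\,\frac{dy}{dx} &= 0 \\
\frac{dy}{dx} &= -\frac{x}{y} \qquad (y \neq 0)
\end{aligned}
```

At the point (0.6, 0.8) this gives dy/dx = -0.75, so if x is increasing at 1 unit per second there, y is decreasing at 0.75 units per second.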

This is made to sound complicated but likely it's very simple.

btw:

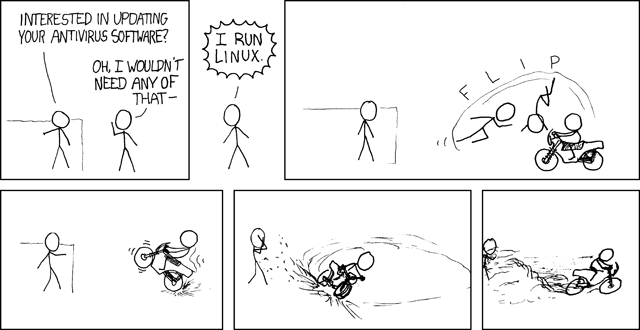

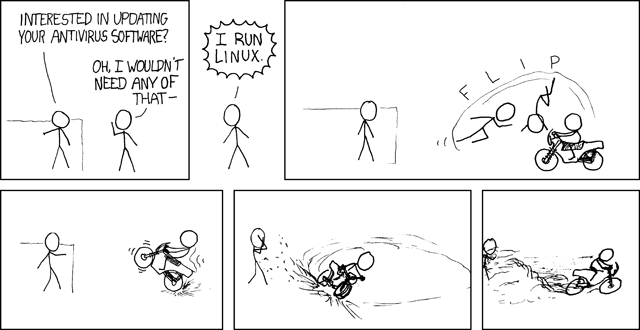

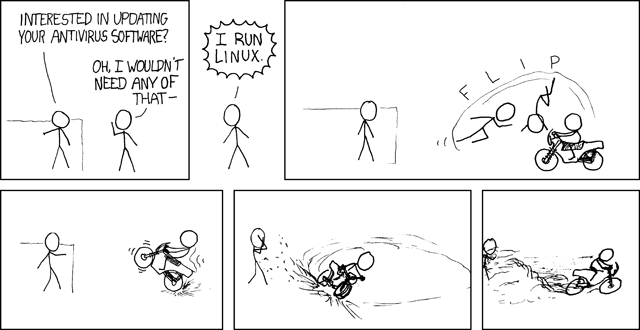

"We actually stand around the antivirus displays with the Mac users just waiting for someone to ask."

http://en.wikipedia.org/wiki/Implicit_function_theorem

Let f : R^{n+m} → R^m be a continuously differentiable function. We think of R^{n+m} as the Cartesian product R^n × R^m, and we write a point of this product as (x, y) = (x_1, ..., x_n, y_1, ..., y_m). f is the given relation. Our goal is to construct a function g : R^n → R^m whose graph (x, g(x)) is precisely the set of all (x, y) such that f(x, y) = 0.

As noted above, this may not always be possible. As such, we will fix a point (a, b) = (a_1, ..., a_n, b_1, ..., b_m) which satisfies f(a, b) = 0, and we will ask for a g that works near the point (a, b). In other words, we want an open set U of R^n, an open set V of R^m, and a function g : U → V such that the graph of g satisfies the relation f = 0 on U × V. In symbols,

\{ (\mathbf{x}, g(\mathbf{x})) \} = \{ (\mathbf{x}, \mathbf{y}) \mid f(\mathbf{x}, \mathbf{y}) = 0 \} \cap (U \times V)

To state the implicit function theorem, we need the Jacobian, also called the differential or total derivative, of f. This is the matrix of partial derivatives of f. Abbreviating (a_1, ..., a_n, b_1, ..., b_m) to (a, b), the Jacobian matrix is

\begin{matrix} (Df)(\mathbf{a},\mathbf{b}) & = & \left[\begin{matrix} \frac{\partial f_1}{\partial x_1}(\mathbf{a},\mathbf{b}) & \cdots & \frac{\partial f_1}{\partial x_n}(\mathbf{a},\mathbf{b})\\ \vdots & \ddots & \vdots\\ \frac{\partial f_m}{\partial x_1}(\mathbf{a},\mathbf{b}) & \cdots & \frac{\partial f_m}{\partial x_n}(\mathbf{a},\mathbf{b}) \end{matrix}\right|\left. \begin{matrix} \frac{\partial f_1}{\partial y_1}(\mathbf{a},\mathbf{b}) & \cdots & \frac{\partial f_1}{\partial y_m}(\mathbf{a},\mathbf{b})\\ \vdots & \ddots & \vdots\\ \frac{\partial f_m}{\partial y_1}(\mathbf{a},\mathbf{b}) & \cdots & \frac{\partial f_m}{\partial y_m}(\mathbf{a},\mathbf{b})\\ \end{matrix}\right]\\ & = & \begin{bmatrix} X & | & Y \end{bmatrix}\\ \end{matrix}

where X is the matrix of partial derivatives in the x's and Y is the matrix of partial derivatives in the y's. The implicit function theorem says that if Y is an invertible matrix, then there are U, V, and g as desired. Writing all the hypotheses together gives the following statement.

Let f : R^{n+m} → R^m be a continuously differentiable function, and let R^{n+m} have coordinates (x, y). Fix a point (a_1, ..., a_n, b_1, ..., b_m) = (a, b) with f(a, b) = c, where c ∈ R^m. If the matrix [(∂f_i/∂y_j)(a, b)] is invertible, then there exists an open set U containing a, an open set V containing b, and a unique continuously differentiable function g : U → V such that

\{ (\mathbf{x}, g(\mathbf{x})) \} = \{ (\mathbf{x}, \mathbf{y}) \mid f(\mathbf{x}, \mathbf{y}) = \mathbf{c} \} \cap (U \times V).
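
To see the statement in action, here is a rough Python sketch for the simplest case n = m = 1. Everything in it is an assumption made for illustration and is not part of the quoted article text above: the relation f(x, y) = x^2 + y^2 - 1 (the unit circle), the point (a, b) = (0.6, 0.8), and the helper names `f`, `df_dx`, `df_dy`, and `solve_g`. It recovers g near a by running Newton's method in y, then compares a finite-difference slope of g with -X/Y, which is what you get by differentiating f(x, g(x)) = 0 with the chain rule.

```python
def f(x, y):
    # Illustrative relation (an assumption): the unit circle, f(x, y) = 0
    return x * x + y * y - 1.0

def df_dx(x, y):
    # The "X" block of the Jacobian (a 1x1 matrix here)
    return 2.0 * x

def df_dy(x, y):
    # The "Y" block of the Jacobian; must be nonzero (invertible) at (a, b)
    return 2.0 * y

def solve_g(x, y_guess, tol=1e-12, max_iter=50):
    """Newton's method in y for fixed x: find y near y_guess with f(x, y) = 0."""
    y = y_guess
    for _ in range(max_iter):
        step = f(x, y) / df_dy(x, y)
        y -= step
        if abs(step) < tol:
            return y
    raise RuntimeError("Newton iteration did not converge")

a, b = 0.6, 0.8   # a point on the circle where df/dy = 1.6 is nonzero
h = 1e-6

# Central difference estimate of g'(a), with g evaluated numerically
numeric_slope = (solve_g(a + h, b) - solve_g(a - h, b)) / (2 * h)

# Slope from the chain rule: g'(a) = -Y^{-1} X
formula_slope = -df_dx(a, b) / df_dy(a, b)

print(numeric_slope, formula_slope)
```

The two printed values should agree to several decimal places. At (1, 0), by contrast, df/dy is 0, so the hypothesis fails and `solve_g` would divide by zero, which matches the fact that the circle is not the graph of a function of x near that point.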
